ChatGPT帮我写了一个AEB程序

大家好,我是李慢慢。

最近有机会试用了下国外的新技术。

结合自己的本职工作,做了一点探索,这里来把结果跟大家分享一下。我主要目的是想试下,chatGPT能否帮助我工作,因此先提问了一些简单的基础的问题,又问了些很刁钻的,最后直接让它写代码。结果还是很满意的。

过程如下。

问题:你能解释一下什么是汽车AEB功能吗?

问题:你推荐AEB使用哪种传感器方案呢?

问题:再问一遍,你推荐AEB使用哪种传感器方案呢?

问题:有哪些比较推荐的传感器搭配方案?

问题:1R1V的配置方案下,你知道如何将两种传感器的数据进行融合,来计算TTC吗?

问题:目前AEB系统中最常用的传感器融合算法是什么?

问题:AEB经常发生误触发,你有什么建议吗?

好了,通过以上问题,我确定chatGPT已经很了解AEB了,然后我准备开始引导它写一些程序来实现这个功能。

为了方便测试,我准备拿一个开源的仿真软件Carla来进行验证,所以,首先我得问问它知不知道这个东西。

问题:你知道Carla仿真模拟器吗?

确定chatGPT知道Carla后,我尝试根据上面的聊天内容,让它设计一个针对1R1V布置方案的AEB程序,输入信号从Carla中拿,输出信号继续控制Carla中的主车。

问题:你能针对一个Radar和一个Camera的方案,你能用python脚本在Carla中创建出来主车和目标物吗?

import carlaimport numpy as npimport cv2# Connect to the Carla simulatorclient = carla.Client('localhost', 2000)client.set_timeout(5.0)world = client.get_world()# Spawn the ego vehicleego_bp = world.get_blueprint_library().find('vehicle.audi.etron')ego_transform = carla.Transform(carla.Location(x=5.0, y=5.0, z=2.0), carla.Rotation(yaw=0.0))ego_vehicle = world.spawn_actor(ego_bp, ego_transform)# Add a camera sensor to the ego vehiclecam_bp = world.get_blueprint_library().find('sensor.camera.rgb')cam_bp.set_attribute('image_size_x', '640')cam_bp.set_attribute('image_size_y', '480')cam_bp.set_attribute('fov', '90')cam_transform = carla.Transform(carla.Location(x=1.5, z=2.4))cam_sensor = world.spawn_actor(cam_bp, cam_transform, attach_to=ego_vehicle)# Add a radar sensor to the ego vehicleradar_bp = world.get_blueprint_library().find('sensor.other.radar')radar_bp.set_attribute('horizontal_fov', '30')radar_bp.set_attribute('vertical_fov', '10')radar_bp.set_attribute('points_per_second', '12000')radar_bp.set_attribute('range', '100')radar_transform = carla.Transform(carla.Location(x=0.8, z=1.0))radar_sensor = world.spawn_actor(radar_bp, radar_transform, attach_to=ego_vehicle)# Define a function to process the radar datadef process_radar_data(data):# Convert the radar data to a NumPy arrayradar_data = np.frombuffer(data.raw_data, dtype=np.dtype('f4'))radar_data = np.reshape(radar_data, (len(data), 4))# Filter the radar data to remove noiseradar_data = radar_data[radar_data[:, 3] > 0.1]# Calculate the time-to-collision for each detected objectttc_list = []for obj in radar_data:obj_pos = carla.Location(x=obj[0], y=obj[1], z=obj[2])obj_dist = obj_pos.distance(ego_vehicle.get_location())obj_speed = obj[3]if obj_speed > 0:ttc = obj_dist / obj_speedttc_list.append(ttc)# If there are any objects with a low TTC, trigger the AEB systemif len(ttc_list) > 0 and min(ttc_list) < 2.0:print("AEB triggered!")# Define a function to process the camera datadef process_camera_data(data):# Convert the camera data to a NumPy arrayimg = np.frombuffer(data.raw_data, dtype=np.uint8)img = np.reshape(img, (data.height, data.width, 4))img = img[:, :, :3]# Apply object detection to the camera image# ...# Register the radar and camera sensors with the simulatorradar_sensor.listen(process_radar_data)cam_sensor.listen(process_camera_data)# Start the simulationworld.tick()# Stop the simulation and cleanupradar_sensor.stop()cam_sensor.stop()ego_vehicle.destroy()

看到chatGPT一句一句打印出来这些脚本,我还是很震惊的,一是它真的能写代码,而且用的正是Carla提供的API。不过仔细阅读下代码,发现它只是简单的根据Radar探测到的数据进行了TTC计算,满足触发条件后给了个print信息而已。并没有用到Camera数据,也没有输出控制指令给到Carla中的主车。所以我继续发问。

问题:你没有综合考虑毫米波雷达和摄像头数据,而且你应该根据TTC计算结果将刹车控制应用到主车上。

import carlaRADAR_RANGE = 30.0 # Maximum radar range in metersCAMERA_FOV = 90.0 # Camera field of view in degreesBRAKE_THRESHOLD = 0.1 # Threshold for triggering the AEB systemdef calculate_ttc(vehicle, radar_data, camera_data):# Find the closest obstacle in the path of the vehicleclosest_obstacle = Noneclosest_obstacle_distance = float('inf')for obstacle in radar_data:distance = obstacle.depthif distance < closest_obstacle_distance:closest_obstacle = obstacleclosest_obstacle_distance = distance# Calculate TTC based on closest obstaclettc = closest_obstacle_distance / closest_obstacle.relative_velocity.x# Use camera data to check if closest obstacle is within camera FOVclosest_obstacle_in_fov = Falsefor detection in camera_data:if detection.bounding_box.contains(closest_obstacle.transform.location):closest_obstacle_in_fov = Truebreak# If closest obstacle is in FOV and TTC is below threshold, trigger AEBif closest_obstacle_in_fov and ttc < BRAKE_THRESHOLD:vehicle.apply_control(carla.VehicleControl(brake=1.0))else:vehicle.apply_control(carla.VehicleControl(throttle=1.0))# Connect to Carla simulatorclient = carla.Client('localhost', 2000)client.set_timeout(10.0)world = client.get_world()# Spawn ego carego_bp = world.get_blueprint_library().find('vehicle.tesla.model3')ego_transform = carla.Transform(carla.Location(x=30.0, y=0.0, z=0.0), carla.Rotation(yaw=180.0))ego_vehicle = world.spawn_actor(ego_bp, ego_transform)# Spawn other obstaclesobstacle_bp = world.get_blueprint_library().find('vehicle.audi.etron')obstacle_transform = carla.Transform(carla.Location(x=50.0, y=-5.0, z=0.0))obstacle_vehicle = world.spawn_actor(obstacle_bp, obstacle_transform)obstacle_vehicle.set_velocity(carla.Vector3D(x=-10.0, y=0.0, z=0.0))# Create radar sensorradar_bp = world.get_blueprint_library().find('sensor.other.radar')radar_bp.set_attribute('range', f'{RADAR_RANGE}')radar_transform = carla.Transform(carla.Location(x=2.5, z=0.0))radar_sensor = world.spawn_actor(radar_bp, radar_transform, attach_to=ego_vehicle)# Create camera sensorcamera_bp = world.get_blueprint_library().find('sensor.camera.rgb')camera_bp.set_attribute('fov', f'{CAMERA_FOV}')camera_transform = carla.Transform(carla.Location(x=2.5, y=0.0, z=1.5))camera_sensor = world.spawn_actor(camera_bp, camera_transform, attach_to=ego_vehicle)# Define function to handle sensor datadef sensor_callback(radar_data, camera_data):calculate_ttc(ego_vehicle, radar_data, camera_data)# Register callback for sensor dataradar_sensor.listen(lambda data: sensor_callback(data, camera_sensor.get_transform().transform(camera_sensor.get_unfiltered_image())))# Keep script running to receive sensor datawhile True:world.tick()

这一次感觉它get到了我意思,而且在这一个程序里将所有我的所有要求满足了,只是还有很多细节需要深入而已。但我依然想继续挑战它。因为我想到实车上AEB算法其实是运行在域控里,所以我希望chatGPT能将AEB算法程序单独拎出来。这个AEB算法的输入是传感器数据,输出是驾驶控制指令。所以我再次向它提问。

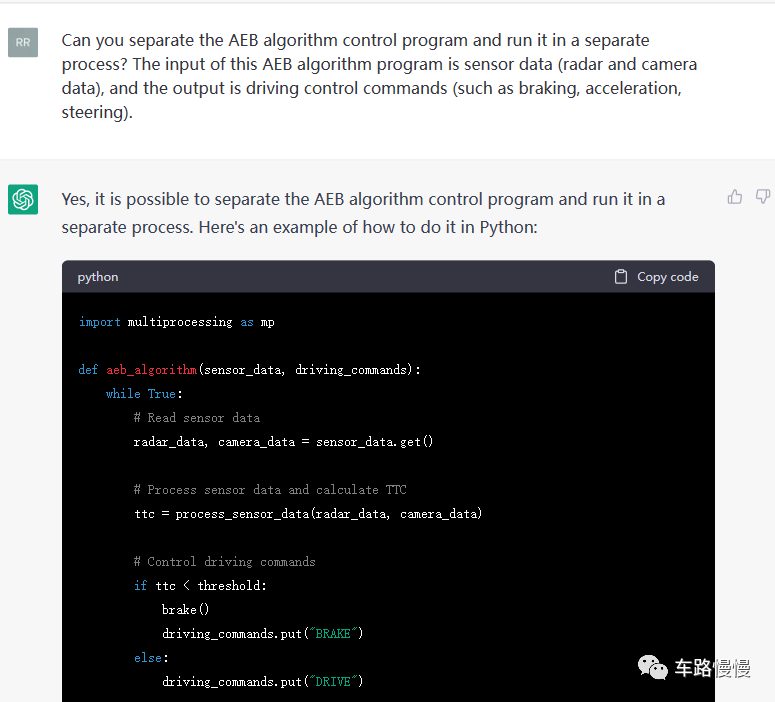

问题:你能把AEB算法控制程序分离出来,单独用一个进程来运行吗?这个AEB算法程序的输入是传感器数据(毫米波雷达和摄像头数据),输出是驾驶控制指令(如制动、加速、转向)。

完整代码如下:

import multiprocessing as mpdef aeb_algorithm(sensor_data, driving_commands):while True:# Read sensor dataradar_data, camera_data = sensor_data.get()# Process sensor data and calculate TTCttc = process_sensor_data(radar_data, camera_data)# Control driving commandsif ttc < threshold:brake()driving_commands.put("BRAKE")else:driving_commands.put("DRIVE")def brake():# Code to apply brakesif __name__ == "__main__":# Create sensor data queuesensor_data = mp.Queue()# Create driving commands queuedriving_commands = mp.Queue()# Start AEB algorithm processp = mp.Process(target=aeb_algorithm, args=(sensor_data, driving_commands))p.start()while True:# Read sensor data from Carla simulator and put it in the sensor data queueradar_data, camera_data = read_sensor_data_from_carla()sensor_data.put((radar_data, camera_data))# Read driving commands from the driving commands queue and control the ego carcommand = driving_commands.get()if command == "BRAKE":brake()else:drive()# Cleanupp.terminate()

我大概理了理它这个框架,如下:

理了理这个代码,感觉它真的懂了我的意思,它新建了两个队列,并在aeb算法中从一个队列中拿传感器数据,向另一个队列中放控制指令。逻辑很畅通,我感觉这个框架是非常不错的。

其实关于信号交互,有很多手段,比如套接字、管道、共享内存等,然后我就顺手问了下它,此处为何选择了用队列来通信,而不是其它?

问题:进程间的通信,你为什么选择了队列,而不是其它,比如套接字、共享内存、管道?

其实我还做了很多尝试性提问:

比如同一个问题不断地提问,它每次回答都会有所改变,直到自己得到自己想要的答案。

也比如让chatGPT输出更完善的代码,但是貌似它的回答是受到字数限制的,因此无法大规模生成代码给我,但我觉得作为我的代码助手,它是绰绰有余的。

但更让人觉得惊喜的是,它很开放,总是能找到一些让自己都没想到的答案,比如上面代码中的队列通信,是我从前都不知道的。

chatGPT是能成为工作的好助手的,它能帮我,也能教我。

这次探索就先这样,至于更完整的代码的运行效果,我觉得是没问题的,只是需要自己再组装下代码而已,实现一个基础的AEB功能的demo程序及仿真效果。这个代码我准备后面加入到之前的本号立项的OpenSIL项目中去,欢迎大家持续关注。

最后的最后,希望国内的AI助手(如文言一心)能早日成熟,帮助我们提高工作效率,早点下班。

瑞斯拜。